Microsoft MVP · Founder, VisualSP · Keynote speaker

I help mid-size Microsoft organizations make Copilot actually stick.

Not by buying more licenses or running more trainings, but by activating the culture Copilot needs to work. I’m Asif Rehmani — and this is what I do, what I write about, and what I’m being booked to talk about on stages around the world.

what I do

Three things I keep showing up for.

Speak

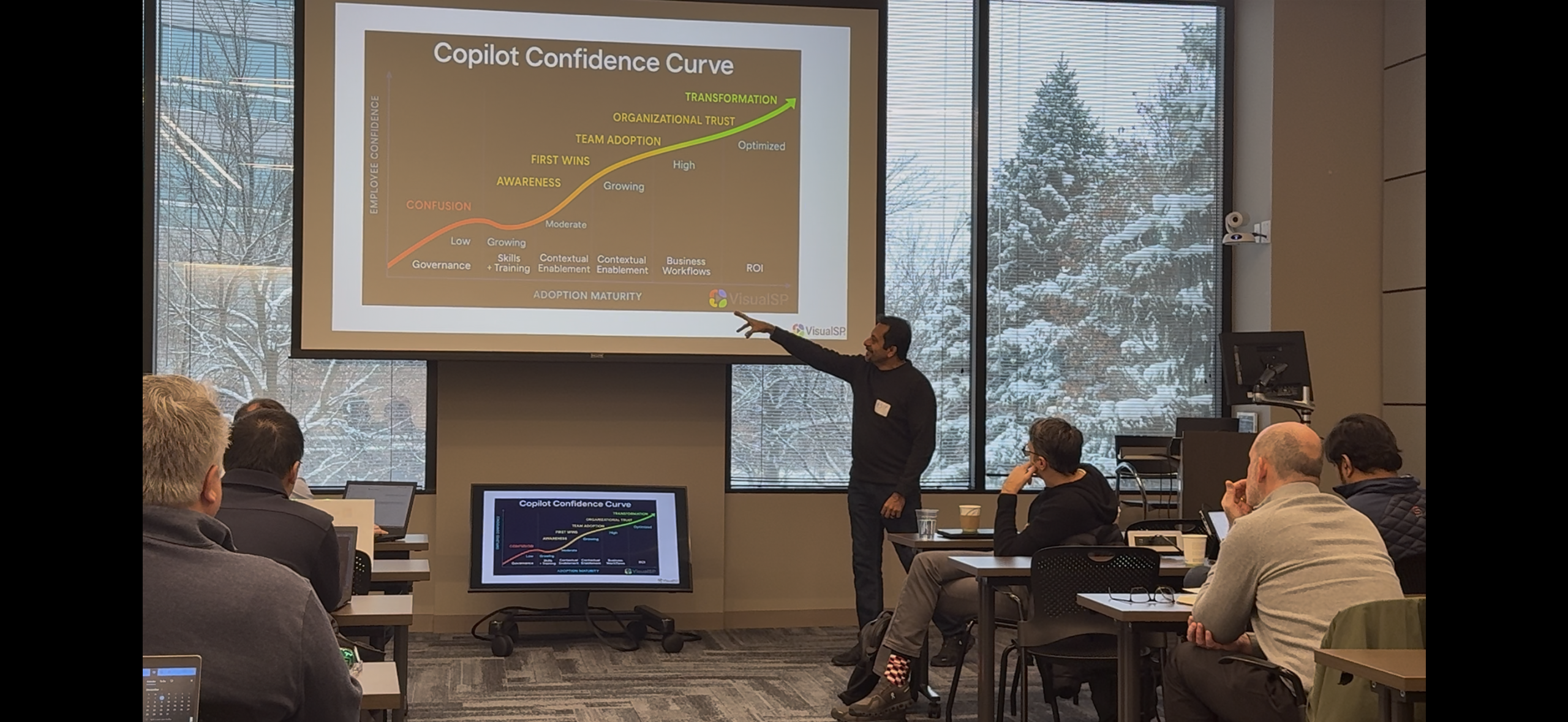

Keynotes and workshops for mid-size Microsoft organizations trying to make Copilot stick. Not theory, not hype. The culture work that turns license spend into actual results.

See talks →

Build

I run VisualSP — the in-app guidance and digital adoption platform helping enterprises actually get value out of Microsoft 365, Dynamics 365, and Copilot, not just buy licenses for them.

About VisualSP →

Write

An unfiltered stream of thinking on AI, work, leadership, and the messier human side of building organizations through fast change. New writing most weeks.

Read the work →

the thesis

Culture Activates Copilot.

“You don’t train people on Copilot. You activate a culture for it.”

Most Copilot rollouts fail. Not because of the tech. Every mid-size Microsoft organization I work with has the same problem. They bought the licenses. They ran the trainings. The usage numbers still won’t move. The board still wants to know where the ROI went.

The fix isn’t another vendor, another pilot, or another all-hands. It’s culture work. The way decisions get made, the way curiosity gets rewarded, the way people are allowed to fail in public. Get that right and Copilot activates almost on its own. Get it wrong and no training program in the world will save it.

This is what I help organizations do. It’s what I write about. It’s what I’m being booked to talk about on stages around the world.

how I do it

The Copilot Activation Workshop.

A one- or two-day working session that turns your team from Copilot license holders into Copilot users who actually get measurable work done.

Activation, not training. Real scenarios. Your environment.

See the workshop

On stages across the country — from AI Agent & Copilot Summit to DynamicsCon, EO Chicago, 365 EduCon, and more.

talks & keynotes

What I’m being booked to talk about.

Culture Activates Copilot

Why Copilot rollouts fail in mid-size Microsoft organizations — and the culture work that makes them actually stick.

Making Copilot Stick

The adoption playbook organizations need before they roll Copilot out to thousands of people. What works, what fails, what to do Monday.

Copilot in the Real Enterprise

What’s actually happening inside real Microsoft 365 deployments — and the patterns that keep producing zero ROI.

AI Governance Without the Bureaucracy

How mid-size Microsoft organizations can put practical AI guardrails in place without strangling the speed they actually need.